|

Size: 6766

Comment: add to CategoryAdvancedPageReplacement

|

← Revision 11 as of 2017-12-30 01:05:12 ⇥

Size: 6776

Comment: converted to 1.6 markup

|

| Deletions are marked like this. | Additions are marked like this. |

| Line 9: | Line 9: |

| attachment:hierarchy-cpucache.jpg | {{attachment:hierarchy-cpucache.jpg}} |

| Line 15: | Line 15: |

| attachment:secondlevel-cache.jpg | {{attachment:secondlevel-cache.jpg}} |

| Line 18: | Line 18: |

| [http://www.usenix.org/publications/library/proceedings/usenix01/zhou.html The Multi-Queue Replacement Algorithm for Second Level Buffer Caches]. Why this algorithm probably isn't very suitable as a first level cache replacement algorithm is left as an exercise to the reader :) | [[http://www.usenix.org/publications/library/proceedings/usenix01/zhou.html|The Multi-Queue Replacement Algorithm for Second Level Buffer Caches]]. Why this algorithm probably isn't very suitable as a first level cache replacement algorithm is left as an exercise to the reader :) |

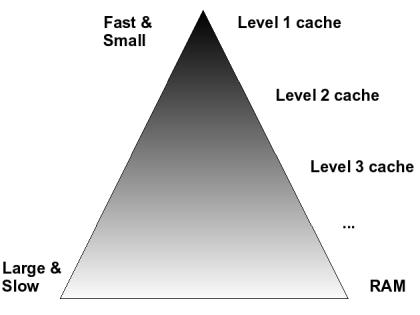

In order to understand the need for advanced page replacement algorithms, it is necessary to understand something about the memory hierarchy, access patterns and mixed workloads.

Memory Hierarchy

There are two types of memory hierarchy. The first one will be familiar to most people, it is the hierarchy of CPU caches, to random access memory (RAM). Caches near the top (level 1 or L1) are faster but smaller, while caches near the bottom (level 2 & 3, L2 / L3) are larger but slower. This provides a cost effective way to very quickly access a small set of data over and over again, while simultaneously being able to cache lots of data. The assumption is that the amount of data that a program accesses on very short time scales will fit in the L1 cache, and that that short term working set can slowly shift to other data.

This cache hierarchy is inclusive: every piece of data that is in L1 cache will also be in L2 cache and in memory; a line of L2 cache cannot be replaced while the corresponding data is still present in the L1 cache. When the L1 cache working set shifts back to a recent working set, chances are the data will still be in L2 cache and can be quickly loaded back into L1.

The second type of cache hierarchy is exclusive: a piece of data can be evicted from the second level cache, while it is still present in the first level cache. This is common when dealing with storage servers, like NFS or Samba servers and other NAS/SAN equipment. Typically the top level in this cache hierarchy will be the RAM on local workstations or computational nodes; the bottom level is the cache on a storage server.

Since the accesses (hits) on the data in the first level (local) cache are not visible to the storage server, the only cache hits the storage server sees are the misses of the first level cache. This means that there commonly is a very large distance between references. This means normal LRU will not be very effective and the storage server needs a smarter replacement algorithm.

More information on the problems LRU has with a second level cache can be found in this paper from the 2001 USENIX Annual Technical Conference by Zhou, Philbin and Li: The Multi-Queue Replacement Algorithm for Second Level Buffer Caches. Why this algorithm probably isn't very suitable as a first level cache replacement algorithm is left as an exercise to the reader ![]()

Access Patterns

There are several types of access patterns that invalidated one of the basic premises behind LRU.

Streaming IO

Streaming IO is a common phenomenon, on file servers serving whole files to client machines, in multimedia applications, decision making and data mining applications, while making backups, etc...

Streaming IO invalidates the LRU premise that "a page that was recently accessed will probably be accessed again soon". In streaming media, the page that will be accessed next typically is a page that has not yet been accessed, and the page that was referenced last won't be accessed again, at least not for a very long time. The worst problem is that classical LRU and clock replacement will evict exactly the wrong pages: the pages that do not (yet) have the accessed bit set, ie. the pages that will be accessed next!

In order to cope with this kind of usage, the replacement algorithm needs to be scan resistant.

Garbage collection

Garbage collection means that programs do not explicitly free memory they no longer use, and will not reuse memory quickly after last having used it. Furthermore, the garbage collection code can sweep over large areas of memory and can have an access pattern completely different from the rest of the program. The VM will have to cope somehow...

Interactive programs

Computers are fast, users are slow - but they expect computers to be fast! It is common for one user program (eg. a web browser) to sit idle while the user uses another application (eg. watching a movie or searching through lots of email). When the user goes back to the web browser, the user expects the web browser to still be in memory. After all, the other data that the user accessed won't be accessed again for quite a while, so why swap something else out to cache that data?

In order to cope with this kind of usage, the replacement algorithm needs to be scan resistant.

File server / secondary cache

On file servers, the client systems will cache the most frequently accessed pages. This means that the page that was accessed most recently on the file server will probably not be accessed again for a very long time, since it lives in the cache on the client machine.

This means that the time between two accesses to the same page on the file server will probably be a whole lot longer than on client systems. In fact, in the time between two accesses to the same page, chances are so many other pages will be accessed that the page will have been evicted from the cache (if LRU were used) on the file server.

In order to detect which pages are accessed most frequently on the file server, the VM may need to remember some information about recently evicted pages, like described in NonResidentPages. Once the VM can distinguish which pages are the most frequently accessed ones, it can cache those instead of having them flushed out of the cache by others.

Note that the pages that are most frequently accessed on the file server can be different from those that are most frequently accessed on the clients. In fact, ideally the file server and the clients would cache different pages, so the file server cache can complement the client caches, instead of duplicating them.

Mixed Workloads

Computers typically run a mix of the programs above. The VM will somehow have to balance memory use between these different programs and keep performance and responsiveness for all programs at acceptable levels.

Graceful Degradation

When a system crosses the border from running under capacity to being overloaded, performance should degrade gracefully, instead of the system suddenly hitting a wall and grinding to a halt. In order for graceful degradation to work, we not only need some kind of load control or artificially introduced unfairness (we know how to do that), but we also need to be able to reliably detect when the system crosses this boundary. This detection might need to be integrated with the page replacement mechanism...

Also see AdvancedPageReplacement or other pages in CategoryAdvancedPageReplacement